Many organizations, governments, and institutions have developed codes of AI ethics to guide the responsible development and deployment of artificial intelligence (AI). AlgorithmWatch provides a comprehensive inventory of codes of AI ethics worldwide.

These codes build on a set of principles grounded in foundational values. Those values help articulate the policies that companies and organizations should follow to manage the ethical, societal, and practical implications of developing and deploying AI systems.

While these codes share overlapping values, their interpretations vary based on context. Global frameworks generally take a broader, societal view, emphasizing human rights, cultural diversity, and sustainability. Corporate frameworks are often pragmatic and business-oriented, prioritizing innovation, compliance, and market-driven outcomes.

For example, when discussing human dignity, global frameworks (UNESCO, OECD) emphasize universal human rights and cultural diversity, ensuring AI respects individuals’ intrinsic worth across different societies. Conversely, corporate frameworks (Google, Microsoft) focus on designing AI systems that empower users and enhance user experience while respecting individual autonomy.

Regarding transparency, global frameworks advocate for complete explainability to all stakeholders, including non-technical users, emphasizing societal trust in AI. Corporate frameworks focus more on practical concerns, such as providing sufficient information to regulators, developers, and end users, while also stressing the need to protect intellectual property and proprietary technology.

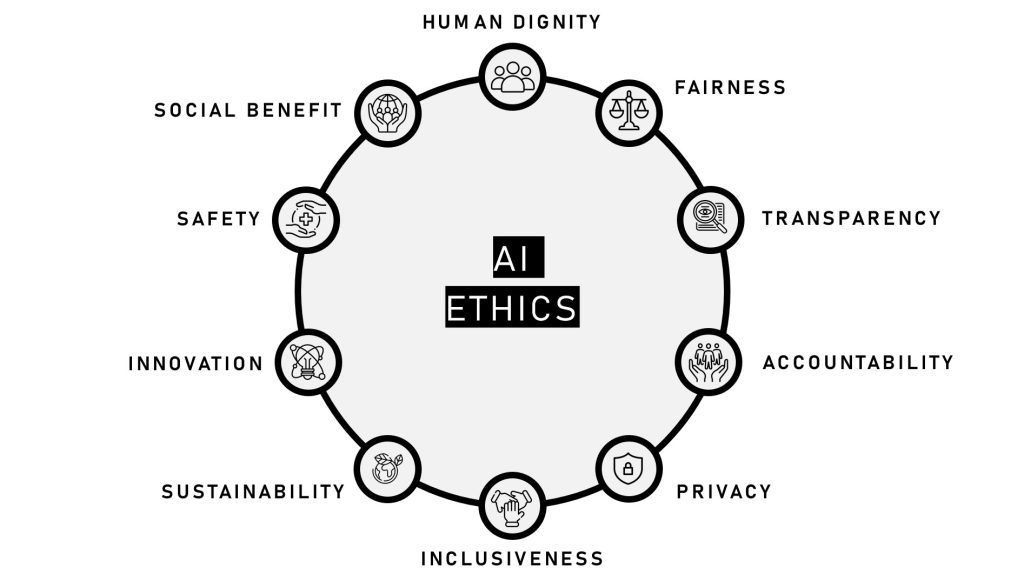

Human Dignity: Recognizing and respecting human rights, autonomy, and intrinsic worth.

Fairness: Ensuring equality, non-discrimination, and mitigation of biases in AI systems.

Transparency: Emphasizing explainability and clarity in AI decision-making processes.

Accountability: Assigning responsibility for AI actions and their societal impacts.

Privacy: Protecting individual data and upholding security measures.

Inclusiveness: Promoting access, diversity, and empowerment for all users.

Sustainability: Supporting environmental and societal well-being alongside technological growth.

Innovation: Encouraging creativity and advancement in AI technologies while maintaining ethical boundaries.

Safety: Ensuring reliable, secure, and harm-free operation of AI systems.

Social Benefit: Maximizing positive impacts on individuals and society as a whole.

About Santiago Andrés Azcoitia
