New paper published: “Visual Parking Occupancy Detection Using Extended Contextual Image Information Via a Multi-Branch Output ConvNeXt Network”

With the development of society, transportation has become a crucial aspect of our daily lives, resulting in a significant increase in the number of vehicles on the roads. As a result, finding available parking slots in metropolitan areas can be a daunting task, leading to a higher risk of accidents and carbon emissions, and negatively impacting the driver’s health. In this context, technological solutions for parking management and real-time monitoring have become crucial to streamline the parking process in urban areas.

This study proposes a new computer vision-based system that utilizes color imagery processed by an innovative deep learning algorithm to identify vacant parking spaces in challenging scenarios. The system utilizes a multi-branch output neural network that maximizes contextual image information to determine the occupancy of each parking space. Unlike existing approaches that only use a neighborhood around each slot, every output infers the occupancy of a specific parking space using all the input image information. This approach makes the system very robust to changes in illumination conditions, various camera perspectives, and mutual occlusions between parked cars.
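
As a rough illustration of the multi-branch idea described above, the following Python sketch computes a single descriptor from the whole image and feeds it to one output branch per parking slot. It is not the paper's ConvNeXt model: `global_descriptor`, `MultiBranchHead`, the random untrained weights, and the toy image are hypothetical stand-ins, chosen only to keep the example free of dependencies.

```python
import math
import random


def global_descriptor(image):
    """Toy stand-in for a backbone: mean intensity of every row and column,
    so the descriptor depends on the whole image, not on a crop around a slot."""
    rows = [sum(r) / len(r) for r in image]
    cols = [sum(c) / len(c) for c in zip(*image)]
    return rows + cols


class MultiBranchHead:
    """One logistic output branch per parking slot; all branches read the same descriptor."""

    def __init__(self, descriptor_size, num_slots, seed=0):
        rng = random.Random(seed)
        # Hypothetical, untrained weights: a real system would learn these.
        self.weights = [
            [rng.uniform(-1, 1) for _ in range(descriptor_size)]
            for _ in range(num_slots)
        ]
        self.biases = [0.0] * num_slots

    def predict(self, descriptor):
        """Return one occupancy probability per slot."""
        probs = []
        for w, b in zip(self.weights, self.biases):
            z = sum(wi * xi for wi, xi in zip(w, descriptor)) + b
            probs.append(1.0 / (1.0 + math.exp(-z)))
        return probs


# Toy 3x4 grayscale "image" with intensities in [0, 1].
image = [
    [0.1, 0.9, 0.2, 0.8],
    [0.3, 0.7, 0.4, 0.6],
    [0.5, 0.5, 0.5, 0.5],
]
descriptor = global_descriptor(image)
head = MultiBranchHead(len(descriptor), num_slots=5)
occupancy = head.predict(descriptor)
```

The point of the design is that no branch sees only a crop: every slot's output is computed from the same whole-image descriptor, which is where the contextual information comes from.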

An extensive evaluation of the proposed system has been conducted using several public datasets, which has shown that the system outperforms existing approaches. The innovative approach of this system can significantly improve the parking experience for drivers, reduce the risk of accidents and carbon emissions, and positively impact the overall health and well-being of urban communities.

Read the full paper at [https://www.mdpi.com/1424-8220/23/6/3329/htm]

New paper published: “Solving Routing Problems for Multiple Cooperative Unmanned Aerial Vehicles using Transformer Networks”

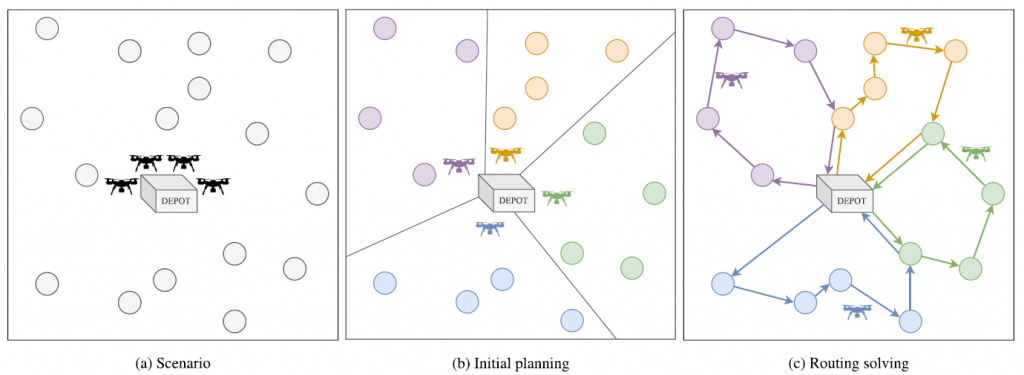

Missions involving Unmanned Aerial Vehicles (UAVs) typically require the UAVs to travel to specific regions, perform designated tasks, and return to a predetermined base once all regions have been covered or when the UAVs’ batteries run low. Planning an efficient route is crucial for achieving optimal results, particularly when coordinating a team of UAVs. This paper presents a system that manages multiple cooperative UAVs by dividing the routing problem into two stages: initial planning and routing solving. During the initial planning stage, the system groups the regions to be visited into multiple clusters based on distance and assigns each cluster to a different UAV. In the routing solving stage, the system calculates the best route for each UAV, taking into account the clusters assigned during the initial planning stage and a variant of the Orienteering Problem that includes the concept of shared regions. This enables the UAVs to visit regions from other clusters, compensating for any suboptimal region clustering from the previous stage. The Orienteering Problem with shared regions is solved using the deep learning architecture Transformer and a deep reinforcement learning framework, which provides high-quality solutions faster than conventional optimization approaches. The system’s benefits are demonstrated through extensive results and comparisons with other Combinatorial Optimization algorithms in cooperative and non-cooperative scenarios.
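
The two-stage structure described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: stage 1 uses plain k-means, and stage 2 replaces the paper's Transformer and deep reinforcement learning solver with a simple nearest-neighbour heuristic under a travel budget. The function names, the coordinates, and the budget value are all hypothetical.

```python
import math


def dist(a, b):
    return math.hypot(a[0] - b[0], a[1] - b[1])


def cluster_regions(regions, num_uavs, iterations=10):
    """Stage 1 (initial planning): k-means on region coordinates, one cluster per UAV."""
    centers = list(regions[:num_uavs])  # deterministic initialisation
    clusters = []
    for _ in range(iterations):
        clusters = [[] for _ in range(num_uavs)]
        for r in regions:
            k = min(range(num_uavs), key=lambda i: dist(r, centers[i]))
            clusters[k].append(r)
        centers = [
            (sum(p[0] for p in c) / len(c), sum(p[1] for p in c) / len(c))
            if c else centers[i]
            for i, c in enumerate(clusters)
        ]
    return clusters


def greedy_route(base, own, shared, budget, visited):
    """Stage 2 stand-in: nearest-neighbour route that must be able to return to base.
    Own-cluster regions are tried first; shared regions from other clusters
    are added only if budget remains."""
    route, pos, used = [base], base, 0.0
    for pool in (own, shared):
        while True:
            candidates = [
                r for r in pool
                if r not in visited
                and used + dist(pos, r) + dist(r, base) <= budget
            ]
            if not candidates:
                break
            nxt = min(candidates, key=lambda r: dist(pos, r))
            used += dist(pos, nxt)
            visited.add(nxt)
            route.append(nxt)
            pos = nxt
    route.append(base)
    return route, used + dist(pos, base)


base = (0.0, 0.0)
regions = [(1, 1), (2, 1), (1, 2), (8, 8), (9, 8), (8, 9), (9, 9)]
budget = 26.2  # hypothetical travel budget per UAV (battery proxy)

clusters = cluster_regions(regions, num_uavs=2)
visited = set()
routes = []
for i, own in enumerate(clusters):
    shared = [r for j, c in enumerate(clusters) if j != i for r in c]
    routes.append(greedy_route(base, own, shared, budget, visited))
```

In this toy layout the UAV assigned to the small nearby cluster has budget left over and picks up regions from the larger, distant cluster, which is the role shared regions play in compensating for an unbalanced clustering.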

Read the full paper at [https://authors.elsevier.com/sd/article/S0952197623002695]

New publication “A Novel System for Nighttime Vehicle Detection Based on …”

We are pleased to announce our new publication “A Novel System for Nighttime Vehicle Detection Based on Foveal Classifiers with Real-Time Performance” in IEEE Trans. Intelligent Transportation Systems.

See the videos at [Link].

Check the database at [Link].

Multi-UAV online mission planning

An online mission planning system based on deep reinforcement learning and graph neural networks has been developed. It manages the routes of a fleet of UAVs in real time, optimizing them so that the fleet visits as many locations as possible through a region-sharing strategy. The cooperation strategy is specifically designed for missions in which the UAVs cannot communicate with each other (radio silence) during execution. [More info]

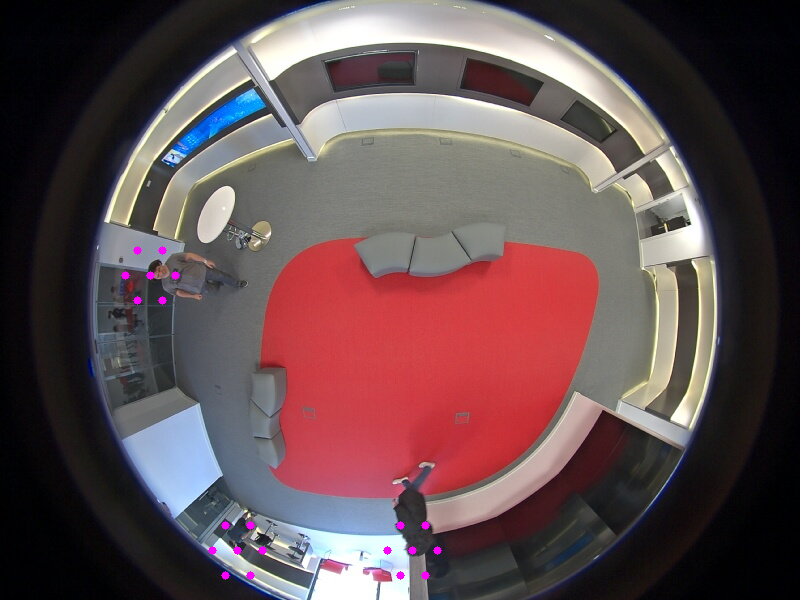

New publication “Robust people indoor localization with omnidirectional…”

Our paper “Robust people indoor localization with omnidirectional cameras using a Grid of Spatial-Aware Classifiers” has been accepted for publication in Signal Processing: Image Communication.

See also our database PIROPO.

New database for parking lot occupancy

See [here] our newly published database for estimating parking lot occupancy with color cameras in complex environments (day and night, perspective occlusions, background occlusions, etc.). We have used this database to train our system, ParkingNet. See the video demo:

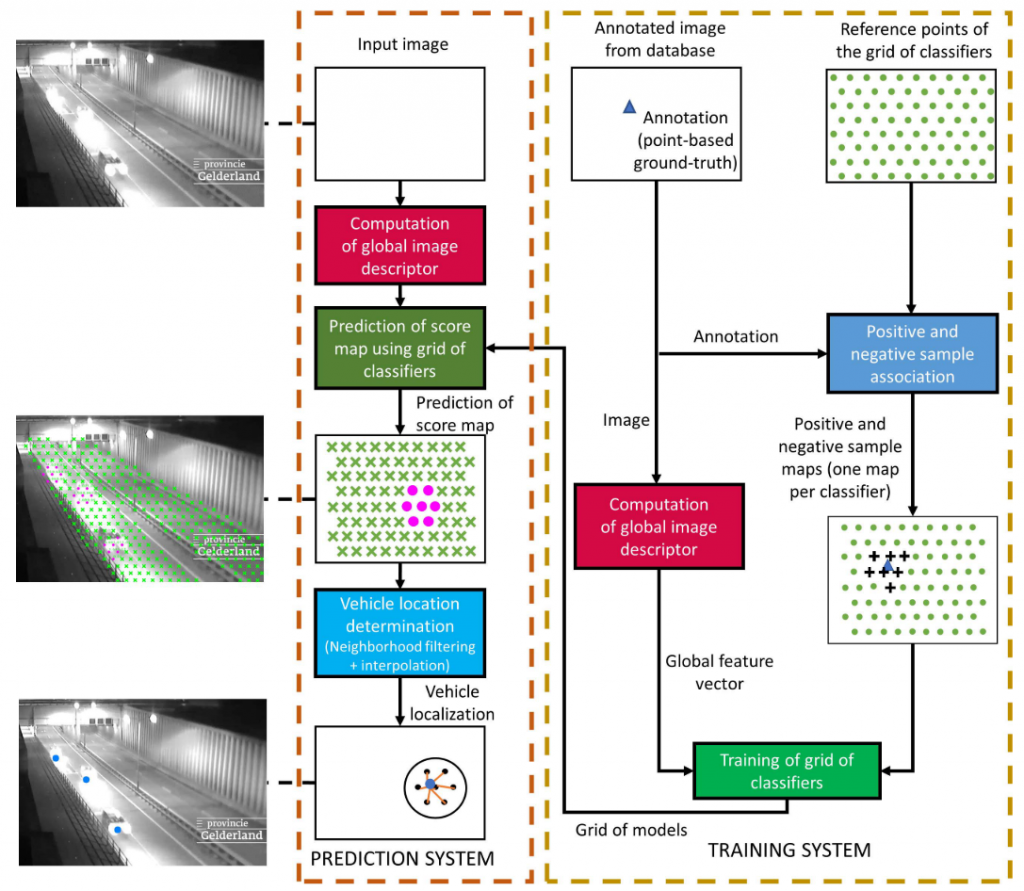

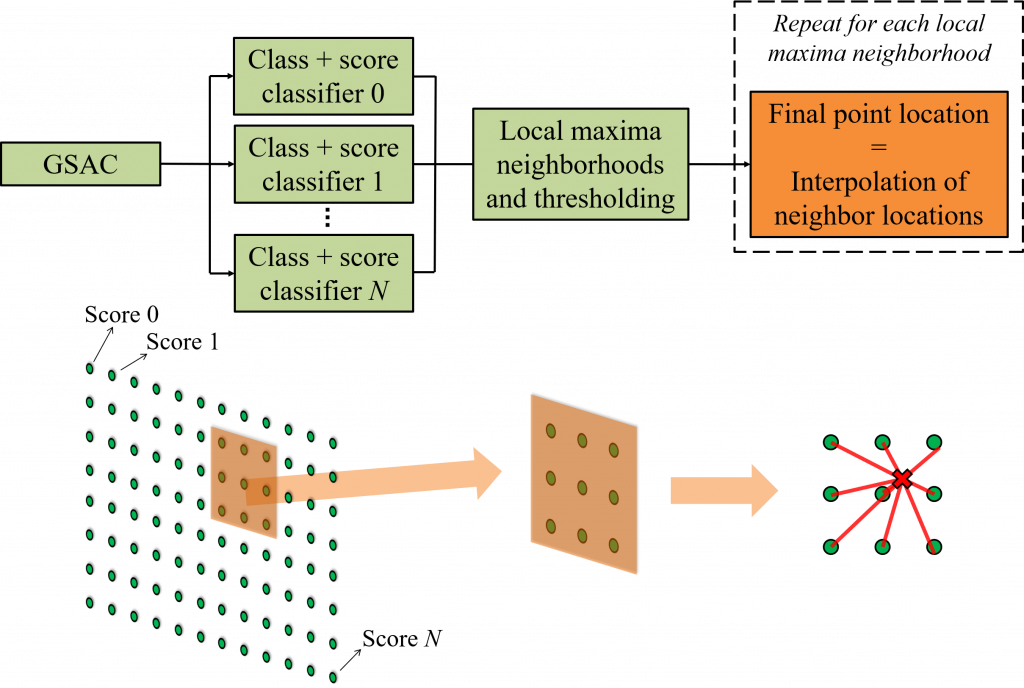

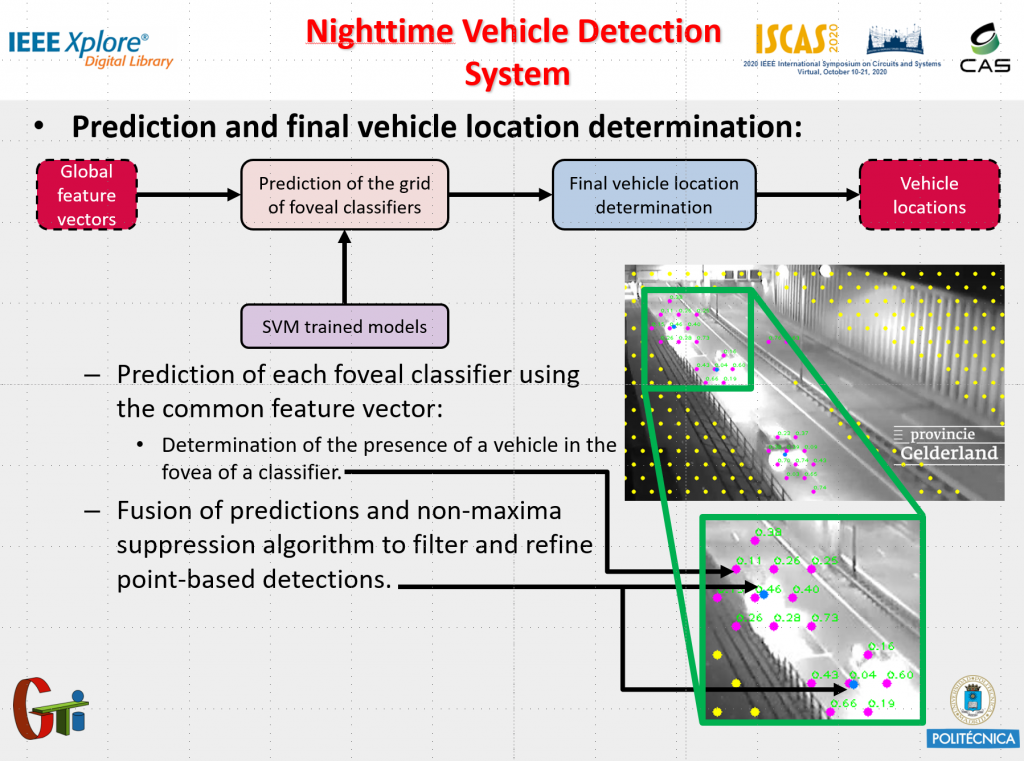

New publication: Robust Nighttime Vehicle Detection Based on Foveal Classifiers

We have just published a new article, “Robust Nighttime Vehicle Detection Based on Foveal Classifiers”. You can find it at [Link], where you can download the paper and watch a video about it. See our presentation below.

Abstract: Visual vehicle surveillance has become an important research field due to its wide range of traffic applications. This task becomes more relevant in nighttime because accidents considerably increase. Typically, this problem is addressed by segmenting the bright image regions produced by vehicle lights, assuming they are well defined. But, often there are only flashes that occupy large image regions, invalidating the previous strategy. Thus, a real-time vehicle detection algorithm for nighttime that addresses the previous challenge is presented. First, the whole image is characterized by only one descriptor. Then, a grid of foveal classifiers that share the same previous image descriptor (unlike the traditional sliding window scheme) estimates the vehicle positions. Every classifier is trained to detect vehicles in specific image regions by analyzing the complex light patterns in the night. Furthermore, a new nighttime database has been also created to assess the effectiveness of the proposed method.

Video demos

Image annotation tool for machine learning

VGG Image Annotator (VIA) is an image annotation tool that can be used to define regions in an image and create textual descriptions of those regions. VIA is an open source project developed at the Visual Geometry Group and released under the BSD 2-Clause license.

Here is a list of some salient features of VIA:

- based solely on HTML, CSS and JavaScript (no external JavaScript libraries)

- can be used offline (full application in a single HTML file of size < 400KB)

- requires nothing more than a modern web browser (tested on Firefox, Chrome and Safari)

- supported region shapes: rectangle, circle, ellipse, polygon, point and polyline

- import/export of region data in CSV and JSON file formats

- supports bulk update of annotations in image grid view

- quick update of annotations using on-image annotation editor

- keyboard shortcuts to speed up annotation

[More]
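
To use exported region data for machine learning, the JSON file can be read with a few lines of Python. The sketch below assumes the layout commonly seen in VIA 2.x annotation exports (one entry per image with `filename` and `regions`, each region carrying `shape_attributes`); these key names, the helper `rectangles_from_via`, and the sample data are assumptions for illustration and should be checked against a real export.

```python
import json


def rectangles_from_via(via_json_text):
    """Return {filename: [(x, y, width, height), ...]} for rectangular regions.
    Key names follow the assumed VIA 2.x export layout."""
    entries = json.loads(via_json_text)
    boxes = {}
    for entry in entries.values():
        regions = entry.get("regions", [])
        if isinstance(regions, dict):
            # Some exports key regions by index instead of using a list.
            regions = list(regions.values())
        rects = []
        for region in regions:
            shape = region.get("shape_attributes", {})
            if shape.get("name") == "rect":
                rects.append(
                    (shape["x"], shape["y"], shape["width"], shape["height"])
                )
        boxes[entry["filename"]] = rects
    return boxes


# Hypothetical sample that mimics the assumed export layout.
sample = json.dumps({
    "lot_001.jpg12345": {
        "filename": "lot_001.jpg",
        "size": 12345,
        "regions": [
            {
                "shape_attributes": {
                    "name": "rect", "x": 10, "y": 20, "width": 50, "height": 40
                },
                "region_attributes": {"label": "car"},
            },
            {
                "shape_attributes": {
                    "name": "circle", "cx": 5, "cy": 5, "r": 3
                },
                "region_attributes": {},
            },
        ],
        "file_attributes": {},
    }
})
boxes = rectangles_from_via(sample)
```

Non-rectangular shapes are skipped here; they would need their own handling, since each shape type stores different attributes.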

Winners of the best business idea award at actúaupm

We are proud to be one of the 10 winners of the Actúaupm entrepreneurship and business competition. Our winning idea is called:

Spaces 2.0 for commerce and business: algorithms based on computer vision and deep learning for image processing, increasing the productivity and security of commercial and business spaces.

More information at: http://actuaupm.blogspot.com.es/2018/04/premios-y-diplomas-las-mejores-ideas-de.html